Threat actors are manipulating stolen images and videos using artificial intelligence (AI) to create deepfakes that show innocent people — including minor children and non-consenting adults — in fake but explicit sexual activity. It’s part of a growing new wave of sextortionist scams, the FBI is warning.

Victims of these scams are increasingly reporting to the agency that personal photos and videos posted on their online pages are being manipulated and then publicly circulated on social media or pornographic websites, the FBI said in an advisory posted this week. Threat actors usually contact the victims to demand payment in money or gift cards in exchange for removing the images or refraining from sharing them with family members or friends. However, once the photos and videos are circulated, it’s difficult for victims to ensure that they are completely removed from the Web, the agency said.

Since April, the FBI has observed an uptick in sextortion victims reporting the use of fake images or videos created from content posted on victims’ social media or other websites, it said.

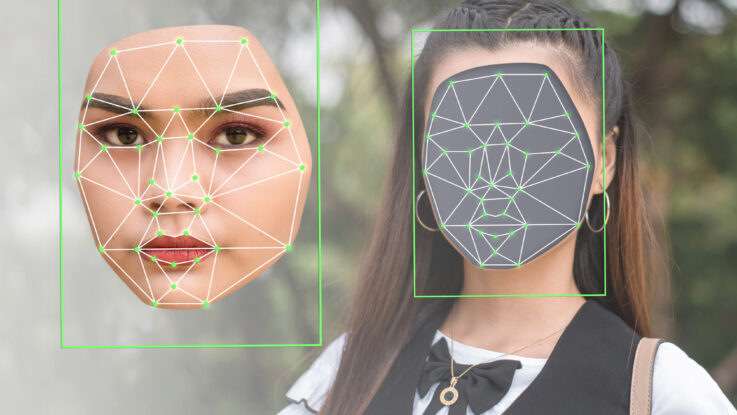

“Malicious actors use content manipulation technologies and services to exploit photos and videos … into sexually-themed images that appear true-to-life in likeness to a victim, then circulate them on social media, public forums, or pornographic websites,” according to the advisory.

Moreover, many of the victims didn’t even know that their images had been stolen, let alone used in the deepfakes and published online, until someone notified them of their existence, the agency noted.

Deepfakes Advance Sextortion Tactics

The FBI’s warning is yet more evidence of how AI is changing the security landscape, with new technologies like deepfakes and ChatGPT posing advanced threats for users and organizations alike.

Sextortion scams are hardly new, but they traditionally have involved malicious actors either coercing victims into providing sexually explicit photos or videos of themselves, or stealing private media from their phones or cloud storage. The attackers then contact victims and threaten to expose the explicit content online, or to family and friends, unless the victims pay an extortion fee, or they harass people into complying with other demands, according to the FBI.

The emergence of deepfake technology — in which images and videos that seem real but aren’t can be created using AI — takes these scams to a whole new level. Threat actors no longer require that targets already have nude or sexually explicit videos or images of themselves, as they can now simply create them by using any photo or video of the victim posted online.

Some security experts already predicted that sextortion would be on the rise this year, with targets extending beyond the typical high-profile business executive and public figure to basically anyone with data stored in a place that attackers can reach.

Moreover, these threats often extend to the friends and family of more publicly recognizable people, with attackers using personal or sexually explicit info or images of loved ones to force them into meeting demands, ransom or otherwise, experts said.

Defending Against Scams

Sextortion scams can have ramifications not only for personal technology users, but also for organizations in cases where business leaders are targeted and face reputational implications and potential harm to their respective businesses if explicit content is exposed. Sextortionists also can coerce business leaders to make potentially unwise business decisions — such as exposing corporate data — as part of their demands when they target them with compromising photos or videos, which can even be gleaned from corporate “about us” pages.

To avoid being targeted or compromised, the FBI urges everyone to exercise caution when posting or direct-messaging personal photos, videos, and identifying information on social media, dating apps, and other online sites.

“Although seemingly innocuous when posted or shared, the images and videos can provide malicious actors an abundant supply of content to exploit for criminal activity,” according to the advisory.

To protect children from being targeted, parents and guardians should monitor their children’s online activity and discuss with them the risks of sharing content online, particularly on social media platforms like TikTok and Snapchat that are popular among young people.

Adults, too, should generally “use discretion when posting images, videos, and personal content online, particularly those that include children or their information,” the agency said. They also should run frequent searches of their own and their children’s personal info (including full name, address, phone number, etc.) to help identify the exposure and spread of personal information on the Internet.

Applying strict privacy settings on social-media accounts to limit public exposure of personal and private images and information also can help people avoid targeting by sextortionists, the agency said.