Facebook, Microsoft and a number of universities have joined forces to sponsor a contest promoting research and development to combat deepfakes, or videos altered through artificial intelligence (AI) to mislead viewers.

The two tech giants, together with the Partnership on AI and academics from Cornell Tech, MIT, University of Oxford, UC Berkeley, University of Maryland, College Park and University at Albany-SUNY, have created the Deepfake Detection Challenge (DFDC), which aims to spur the industry to create technology that can detect and prevent deepfakes, according to a Facebook blog post attributed to company CTO Mike Schroepfer.

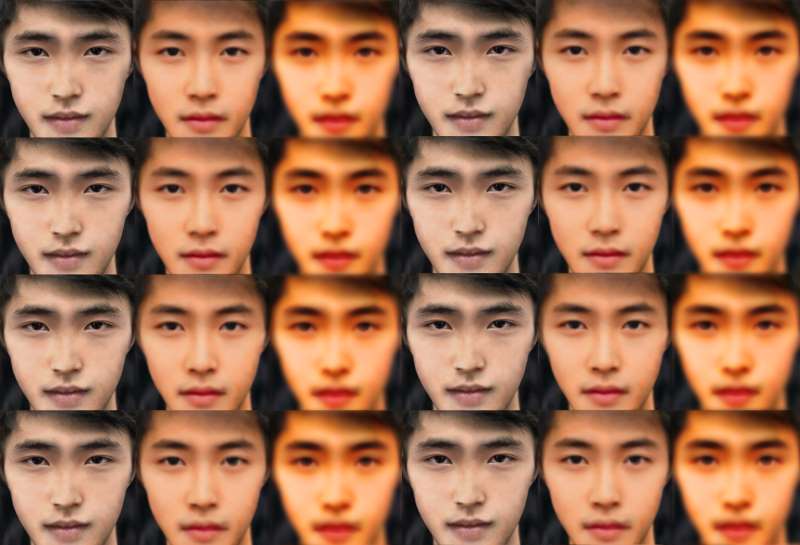

Deepfake techniques use AI to alter videos of real people so that they appear to do and say things they never did or said, presenting a distorted version of reality. While this technology already exists and is rapidly advancing, the industry doesn’t yet have a data set or benchmark for detecting when it’s being used, hence the contest, Schroepfer wrote.

In the first known case of successful financial scamming via audio deepfakes, in March cybercrooks were able to create a near-perfect impersonation of a chief executive’s voice – and then used the audio to fool his company into transferring $243,000 to their bank account.

“We want to catalyze more research and development in this area and ensure that there are better open-source tools to detect deepfakes,” Schroepfer wrote.

To do this, DFDC will include a data set and leaderboard, as well as offer grants and awards for producing technology that can prevent and detect deepfake videos.

The Partnership on AI’s new Steering Committee on AI and Media Integrity, which includes Facebook, WITNESS and Microsoft as well as other representatives from technology, media and academia, will oversee the governance of the challenge.

Deepfakes created quite a public stir recently thanks to a viral video in which actor Bill Hader was seen doing impressions of fellow actors Tom Cruise and Seth Rogen. The video was altered so that Hader’s face took on the appearance of Cruise’s and Rogen’s during the respective impressions.

The video demonstrated how technology is blurring the line between what is real and what is merely presented as real, something especially disturbing in the current U.S. socio-political climate and era of so-called “fake news.”

Detecting and preventing deepfakes is especially crucial for social media companies such as Facebook, Microsoft, Google and Twitter, whose platforms are used to create and distribute such videos.

Indeed, while images have been manipulated for almost as long as photography has existed, “it’s now possible for almost anyone to create and pass off fakes to a mass audience,” Antonio Torralba, professor of electrical engineering and computer science at MIT, said in a press statement.

“The goal of this competition is to build AI systems that can detect the slight imperfections in a doctored image and expose its fraudulent representation of reality,” said Torralba, who also is director of the MIT Quest for Intelligence.

For its part, Facebook is contributing $10 million to the challenge. Moreover, mindful of its own issues with data privacy, the company won’t be using data from its own platform in the DFDC data set and instead will commission a realistic data set from “paid actors,” Schroepfer wrote.

DFDC sponsors will test the data set and challenge parameters during a meeting at the International Conference on Computer Vision (ICCV) in October. Once these are finalized, the sponsors will release the full data set and launch DFDC at the Conference on Neural Information Processing Systems (NeurIPS) in December.